Key Takeaways

- AI usage data reveals real skill gaps: Tracking how teams interact with AI tools helps you identify where employees lack workflow knowledge, prompt skills, or confidence in using AI in their daily work.

- Team-level insights work better than individual monitoring: Analyzing adoption patterns across teams avoids micromanagement and creates a safer environment for experimentation and learning.

- AI adoption does not automatically mean productivity gains: Employees may use AI tools frequently, but without structured training and workflow integration, usage rarely translates into better outcomes.

- Most organizations measure the wrong signals: Metrics like licenses, logins, or time spent in AI tools provide limited insight. More useful indicators include cycle time, quality improvements, rework rates, and time saved per task.

- Usage patterns can expose both skill gaps and governance gaps: Low adoption, uneven usage across similar roles, or shallow AI usage often indicate training needs. Shadow AI or risky usage patterns usually point to missing policies and guardrails.

- A simple AI tool taxonomy helps you analyze usage patterns: Categorizing tools by function, such as writing, coding, research, documentation, or creative generation, makes it easier to connect AI usage with team outcomes.

- A structured framework makes AI upskilling operational: The process involves defining measurement boundaries, establishing a baseline, turning usage signals into training hypotheses, and launching targeted learning programs.

- Targeted training is more effective than generic AI education: Role-specific upskilling programs that focus on real workflows lead to stronger adoption and measurable improvements in productivity.

- A 30-day pilot is often enough to validate the strategy: Organizations can start small by establishing guardrails, running focused workshops, measuring early outcomes, and then scaling successful programs.

- Workforce analytics platforms simplify AI adoption analysis: Tools like Flowace automatically track application usage and workflow patterns, helping organizations turn AI usage insights into practical upskilling strategies.

- The ultimate goal is better work outcomes, not more AI usage: Successful AI adoption focuses on improving productivity, quality, and decision-making rather than simply increasing the amount of time employees spend using AI tools.

Most organizations are encouraging employees to experiment with AI tools, yet many leaders struggle with a practical question: how do you turn employee AI usage into real skill development without creating a culture of surveillance? Simply knowing that employees are using AI does not mean those tools are improving productivity, decision-making, or work quality.

A more effective approach is to look at employee AI tool usage patterns at the team level rather than monitoring individuals. Data can reveal which tools teams rely on, how consistently they use them, and whether that usage aligns with stronger outcomes. When analyzed correctly, these insights highlight capability gaps, workflow inefficiencies, and opportunities where targeted training could make a meaningful difference.

Research from McKinsey & Company suggests that companies gain the most value from AI when adoption is paired with structured learning. Instead of hovering over employees or tracking every interaction, leaders can use aggregated usage insights to design focused upskilling initiatives that strengthen team capabilities.

This guide explains how to use AI tool usage data to plan team upskilling programs without micromanaging employees, helping organizations turn AI adoption into measurable improvement in how work actually gets done.

What Counts As AI Tool Usage Data In The Workplace?

AI tool usage data is the set of behavioral signals that shows how your team interacts with AI tools at work. It typically includes:

- Which AI tools are used and by which teams

- How often they are used (daily, weekly, occasional)

- Time-on-tool or session frequency

- Tool category (chatbot, coding assistant, image generation, research, meeting notes)

- Context signals, such as the app or website activity surrounding an AI session (for example, “AI for drafting” followed by “docs editing”)

This data matters because surveys and self-reporting often miss what is actually happening with AI tools. People forget. People guess. Or they stay quiet when they are unsure.

Why Do Teams Struggle To Use AI Usage Data for Training?

AI usage is growing fast, but it is uneven. For example, Gallup reports daily workplace AI use of 12% and frequent use of 26% in late 2025, a reminder that how widely AI is used depends heavily on role and company context.

Adoption without training is also common. A Clutch survey found that 74% of workers use AI daily but only 33% receive training. That gap is exactly why usage data can guide upskilling investment.

In most workplaces, employees are curious and willing to experiment. The problem is that AI adoption inside organizations tends to be unstructured and poorly measured, which makes it hard to translate usage data into meaningful improvements.

There are several reasons behind this issue:

- People do not know which tools are approved, so they either avoid AI or use it quietly.

- Teams confuse “using AI” with “getting value,” so adoption rises but impact stays fuzzy.

- Training is generic. It does not map to role-specific workflows, so behavior does not change.

- Managers overcorrect and start tracking individuals, which creates fear and metric gaming.

- People copy-paste sensitive data into public tools because no one taught safe patterns.

- The organization measures the wrong thing (licenses, logins) instead of outcomes (cycle time, quality, time saved).

- Data quality issues create “false signals,” such as idle-time false positives during meetings or deep reading, which make the analytics feel like an unreliable performance scorecard rather than a diagnostic tool.
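The idle-time problem in the last point is easy to underestimate. One possible mitigation, sketched below in Python, is to discard idle intervals that overlap a scheduled meeting before any report is built. It assumes you can export idle periods and calendar events as start/end timestamps; the function names and data shapes are hypothetical, not part of any particular product.

```python
from datetime import datetime
from typing import List, Tuple

# (start, end) pairs. This shape is an assumption about your export,
# not any specific tool's schema.
Interval = Tuple[datetime, datetime]


def overlaps(a: Interval, b: Interval) -> bool:
    """True if two half-open [start, end) intervals share any time."""
    return a[0] < b[1] and b[0] < a[1]


def drop_meeting_idle(idle: List[Interval], meetings: List[Interval]) -> List[Interval]:
    """Remove idle intervals that overlap a scheduled meeting.

    Idle input during a meeting usually means someone is listening or
    presenting, so counting it as idle would be a false signal.
    """
    return [i for i in idle if not any(overlaps(i, m) for m in meetings)]


if __name__ == "__main__":
    def at(hour: int, minute: int) -> datetime:
        return datetime(2025, 1, 6, hour, minute)  # arbitrary example date

    idle = [(at(10, 5), at(10, 25)), (at(14, 0), at(14, 10))]
    meetings = [(at(10, 0), at(10, 30))]
    print(drop_meeting_idle(idle, meetings))  # keeps only the 14:00 gap
```

Deep reading does not show up on a calendar, so this filter only covers the meeting case; a longer idle threshold for reading-heavy roles is one possible complement.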

How To Spot AI Skill Gaps Using AI Usage Analytics For Your Team?

You do not need a sophisticated AI model to identify skill gaps across your teams. In most cases, a few clear patterns in usage data will tell you what is actually happening. When you review AI usage analytics with the right lens, you can quickly see whether the issue is a training problem, a workflow problem, or a governance problem.

Start by looking for patterns that usually signal a skill gap.

Low Adoption In Places Where AI Should Clearly Help

If a team performs tasks that AI can easily accelerate but usage remains low, there is likely a knowledge or confidence gap.

For example, a support team may spend hours writing long responses to customer queries every day. If AI tools that can draft replies or summarize tickets are available but rarely used, the problem is not the tool itself. It usually means people do not know how to integrate it into their daily workflow.

Uneven Adoption Within The Same Role

Sometimes you will notice that one team or pod uses AI consistently while another group with the same responsibilities barely touches it.

This is a strong signal that training or knowledge sharing is uneven. Some managers or team leads may have encouraged experimentation and shared best practices, while others never introduced those workflows to their teams.

When adoption varies widely across identical roles, the issue is usually training reach and enablement, not capability.
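To check whether adoption is uneven within a role, you can compare each team's weekly adoption rate with other teams in the same role. The sketch below assumes you already have per-team counts of weekly AI users and headcount; the 0.3 threshold (a 30-percentage-point gap) is an arbitrary starting point, not a benchmark.

```python
from collections import defaultdict
from typing import Dict, List, NamedTuple


class TeamAdoption(NamedTuple):
    team: str
    role: str
    weekly_ai_users: int  # members who used at least one AI tool this week
    headcount: int


def adoption_spread_by_role(rows: List[TeamAdoption]) -> Dict[str, float]:
    """For each role, the gap between its highest and lowest team adoption rate."""
    rates: Dict[str, List[float]] = defaultdict(list)
    for r in rows:
        if r.headcount > 0:  # skip empty teams to avoid dividing by zero
            rates[r.role].append(r.weekly_ai_users / r.headcount)
    return {role: max(vals) - min(vals) for role, vals in rates.items()}


def flag_uneven_roles(rows: List[TeamAdoption], threshold: float = 0.3) -> List[str]:
    """Roles whose adoption gap exceeds `threshold` (an assumed cut-off)."""
    spread = adoption_spread_by_role(rows)
    return sorted(role for role, gap in spread.items() if gap > threshold)


if __name__ == "__main__":
    rows = [
        TeamAdoption("support-pod-a", "support", 9, 10),
        TeamAdoption("support-pod-b", "support", 3, 10),
        TeamAdoption("platform", "engineering", 7, 10),
        TeamAdoption("payments", "engineering", 6, 10),
    ]
    print(flag_uneven_roles(rows))  # ['support']
```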

Shallow Usage Patterns

Another common signal is when employees use AI tools only for very basic tasks.

For instance, people may rely on AI to rewrite emails or polish sentences but rarely use it for deeper work such as synthesizing research, structuring complex problems, or generating outlines for analysis. This pattern often indicates that employees understand the surface capabilities of AI but have not developed stronger prompt strategies or workflow integration skills.

In other words, they are using AI, but they are not using it effectively.
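Shallow usage is harder to see than low usage, because the activity numbers can look healthy. If sessions can be tagged with a task type (by a classification step or a lightweight self-report, both assumptions here), a rough indicator is the share of each team's AI sessions that fall into basic tasks. The task labels below are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple

# Hypothetical task labels; adjust to however your sessions are tagged.
BASIC_TASKS = {"rewrite", "polish", "grammar"}
DEEP_TASKS = {"research_synthesis", "problem_structuring", "analysis_outline"}


def shallow_share(sessions: Iterable[Tuple[str, str]]) -> Dict[str, float]:
    """Fraction of each team's tagged AI sessions that are basic tasks.

    Each session is a (team, task_type) pair. Sessions with a task type in
    neither set are skipped rather than guessed.
    """
    basic: Counter = Counter()
    known: Counter = Counter()
    for team, task in sessions:
        if task in BASIC_TASKS or task in DEEP_TASKS:
            known[team] += 1
            if task in BASIC_TASKS:
                basic[team] += 1
    return {team: basic[team] / count for team, count in known.items()}
```

A team whose known sessions are mostly rewrites and polishing is a reasonable candidate for prompt-strategy and workflow-integration training, though the share alone does not prove a skill gap.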

High Usage With Little Improvement In Outcomes

High activity inside an AI tool does not automatically translate into better results.

If usage is high but key metrics such as cycle time, turnaround speed, or work quality remain unchanged, the issue is rarely adoption. It is usually a workflow or skill problem. Employees may be experimenting with prompts but not integrating AI in a way that actually improves the task.

This is where targeted training can help. The goal is not to increase usage further but to improve how AI is used within specific workflows.
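The high-usage, flat-outcome pattern can be written as a simple rule: usage above some level combined with little change in a key outcome. This sketch assumes cycle time as the outcome and a before/after measurement for each team; both cut-offs are assumed defaults to tune for your context.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TeamSnapshot:
    team: str
    sessions_per_person_week: float  # average AI sessions per person per week
    cycle_time_before_days: float    # baseline median cycle time (must be > 0)
    cycle_time_after_days: float     # current median cycle time


def relative_improvement(s: TeamSnapshot) -> float:
    """Fractional reduction in cycle time; positive means work got faster."""
    return (s.cycle_time_before_days - s.cycle_time_after_days) / s.cycle_time_before_days


def high_usage_flat_outcome(
    teams: List[TeamSnapshot],
    usage_threshold: float = 10.0,   # assumed cut-off for "high" usage
    min_improvement: float = 0.05,   # assumed: below 5% counts as unchanged
) -> List[str]:
    """Teams that use AI heavily but show little cycle-time improvement."""
    return [
        t.team
        for t in teams
        if t.sessions_per_person_week >= usage_threshold
        and relative_improvement(t) < min_improvement
    ]
```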

In some cases, the data points to a governance gap rather than a skill gap. Two patterns are worth watching for.

Shadow AI Usage

You may notice AI activity appearing in browser or application logs even though those tools are not officially approved.

This is a classic sign of shadow AI. Employees are clearly trying to use AI to get work done, but the organization has not provided clear guidance on which tools are allowed. In this case, the problem is not simply training. It is a policy and enablement issue.

People will find tools that help them work faster. Your job is to provide approved options and clear guidelines so they do not need to improvise.
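A first pass at spotting shadow AI can compare the AI-related domains seen in browser activity against your approved list. The sketch assumes an export of (team, domain) pairs without user identifiers; the domain lists are illustrative examples, not recommendations about which tools to approve.

```python
from typing import Dict, Iterable, List, Set, Tuple

# Example lists only; replace with your organization's approved tools and
# whichever AI domains you want to recognize.
APPROVED_AI_DOMAINS: Set[str] = {"chatgpt.com"}
KNOWN_AI_DOMAINS: Set[str] = APPROVED_AI_DOMAINS | {"claude.ai", "gemini.google.com", "perplexity.ai"}


def shadow_ai_by_team(visits: Iterable[Tuple[str, str]]) -> Dict[str, List[str]]:
    """Map each team to the unapproved AI domains seen in its activity.

    `visits` holds (team, domain) pairs with no user identifiers, so the
    result can only be read at the team level. Domains are matched exactly
    after lowercasing; subdomains are not handled in this sketch.
    """
    found: Dict[str, Set[str]] = {}
    for team, domain in visits:
        d = domain.strip().lower()
        if d in KNOWN_AI_DOMAINS and d not in APPROVED_AI_DOMAINS:
            found.setdefault(team, set()).add(d)
    return {team: sorted(domains) for team, domains in found.items()}
```

The output is best read as a list of tools to evaluate for approval or to cover in guidelines, in line with the enablement framing above.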

Risky Usage In Sensitive Workflows

Another signal to watch for is sudden spikes in AI usage around sensitive work such as legal review, financial analysis, or customer data handling.

Without clear policies, employees may experiment with AI in places where data protection rules should apply. These patterns do not necessarily indicate bad intent. They usually indicate that people were never taught where AI is safe to use and where extra caution is required.
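Spikes can be flagged with a simple statistical rule: compare the latest week's AI activity in a sensitive workflow with the mean and standard deviation of earlier weeks. This is a generic outlier heuristic, not a method any particular platform uses; the defaults for `k` and `min_history`, and the floor of 1.0 on the standard deviation, are assumptions.

```python
from statistics import mean, stdev
from typing import List


def is_spike(weekly_counts: List[int], k: float = 2.0, min_history: int = 4) -> bool:
    """True if the latest week's count is unusually high versus earlier weeks.

    Rule: latest > mean(history) + k * stdev(history), where history is every
    week before the latest. `k` and `min_history` are assumed defaults.
    """
    needed = max(min_history, 2)  # stdev needs at least two data points
    if len(weekly_counts) < needed + 1:
        return False  # not enough history to judge
    history, latest = weekly_counts[:-1], weekly_counts[-1]
    # A floor of 1.0 keeps perfectly flat histories from flagging tiny bumps.
    spread = max(stdev(history), 1.0)
    return latest > mean(history) + k * spread
```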

When you step back and look at these patterns collectively, AI usage analytics becomes less about monitoring employees and more about diagnosing capability gaps across teams. The goal is to identify where people need better training, where workflows need improvement, and where governance needs to catch up with real behavior.

A Practical AI Tool Map You Can Start With

When you begin analyzing AI usage across teams, it helps to start with a simple tool taxonomy. You do not need a perfect framework at the beginning. What you need is a practical map that helps you understand what types of tools people are using and why.

Think of this as a working structure that you can refine over time as adoption grows and new tools enter your stack.

Writing and Editing

For tasks such as drafting content, rewriting text, summarizing information, or polishing communication, tools like ChatGPT are commonly used. Teams often rely on these tools to speed up documentation, marketing copy, internal communication, and customer responses.

Coding Assistants

Engineering teams frequently use coding copilots to generate code suggestions, troubleshoot errors, or accelerate routine development tasks. A well-known example is GitHub Copilot, which integrates directly into development environments and helps developers work faster without leaving their workflow.

Research Assistants

For tasks that involve synthesizing information, brainstorming ideas, or exploring complex topics, many teams turn to tools like Claude. These tools are often used to structure research, summarize large documents, or explore possible approaches before deeper analysis begins.

Knowledge and Documentation

Teams that manage internal knowledge bases or collaborative documentation often rely on platforms with built-in AI support, such as Notion AI. These tools help organize information, generate summaries, and streamline documentation workflows.

Creative Generation

For visual ideation and creative assets, tools like Midjourney are widely used. Design and marketing teams often use them to generate concepts, explore visual directions, or produce draft creative assets quickly.
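Once the categories are settled, the taxonomy can live as a plain lookup table that your analysis code uses to roll tool activity up into categories. The identifiers below are assumptions about how tools might appear in an activity export. Note that a domain such as `notion.so` covers the whole Notion product, so it would over-count Notion AI usage unless your data separates the two.

```python
from collections import Counter
from typing import Dict, Iterable, Optional, Tuple

# Illustrative mapping; keys are lowercase tool identifiers as they might
# appear in an activity export (an assumption about your data source).
AI_TOOL_TAXONOMY: Dict[str, str] = {
    "chatgpt.com": "writing_and_editing",
    "github copilot": "coding_assistant",
    "claude.ai": "research_assistant",
    "notion.so": "knowledge_and_documentation",
    "midjourney.com": "creative_generation",
}


def categorize(tool: str) -> Optional[str]:
    """Return the category for a tool identifier, or None if unmapped."""
    return AI_TOOL_TAXONOMY.get(tool.strip().lower())


def category_counts(events: Iterable[Tuple[str, str]]) -> Counter:
    """Count (team, category) pairs; unmapped tools go to 'uncategorized'."""
    counts: Counter = Counter()
    for team, tool in events:
        counts[(team, categorize(tool) or "uncategorized")] += 1
    return counts
```

Keeping an explicit "uncategorized" bucket makes it easy to see when new tools enter your stack and the map needs refining.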

A Step-By-Step Framework To Turn AI Usage Monitoring Into A Team Upskilling Strategy

If you want AI adoption to translate into real capability, you need a simple operational framework. The goal of this framework is to use usage insights to identify skill gaps and design focused training that improves outcomes.

Step 1: Define What You Will and Will Not Measure

Before collecting any data, decide what your measurement approach looks like. Writing this down early helps you build trust and prevents employee monitoring from drifting into micromanagement.

At a minimum, you should establish three principles.

- You measure team-level adoption patterns, including how often AI tools are used and which workflow categories they support.

- You do not review individual prompts by default. The purpose is to understand how teams work, not to inspect how individuals think.

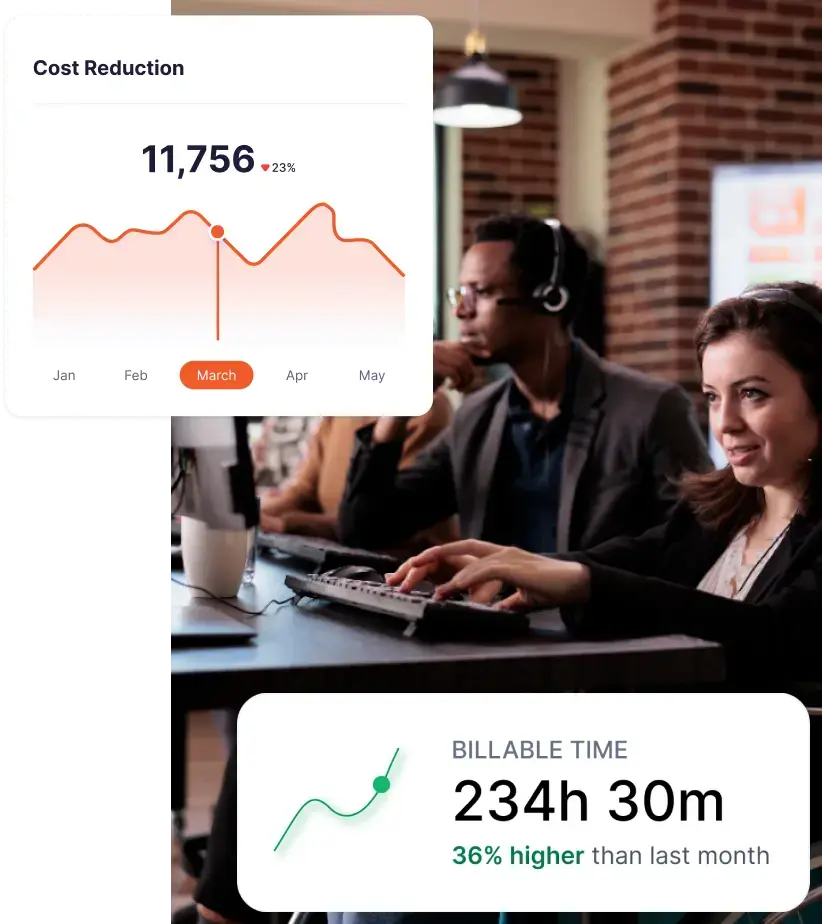

- You evaluate AI impact using business outcomes rather than activity metrics. Metrics such as cycle time, quality improvements, or response speed matter far more than time spent inside a tool.
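These principles can be enforced in the aggregation step itself. This minimal sketch assumes one week of raw events that each carry a team name and a pseudonymous user ID; it reads only those two fields, so any prompt text in the raw data is never stored, and it suppresses teams below a minimum size. The value of `MIN_GROUP_SIZE` is an assumption to agree with HR and legal, not a standard.

```python
from collections import defaultdict
from typing import Dict, Iterable, Mapping, Set

MIN_GROUP_SIZE = 5  # assumed privacy floor; agree on the real value with HR/legal


def team_adoption_rates(events: Iterable[Mapping[str, str]],
                        team_sizes: Mapping[str, int]) -> Dict[str, float]:
    """Weekly share of each team that used at least one AI tool.

    Only the "team" and "user_id" fields are read, and user IDs are used
    solely to de-duplicate people in memory; any other field (for example,
    prompt text) is ignored. Teams smaller than MIN_GROUP_SIZE are left out
    so that no reported number describes one or two individuals.
    """
    active: Dict[str, Set[str]] = defaultdict(set)
    for event in events:
        active[event["team"]].add(event["user_id"])
    return {
        team: len(active.get(team, set())) / size
        for team, size in team_sizes.items()
        if size >= MIN_GROUP_SIZE
    }
```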

Step 2: Build a Baseline Using Workforce Analytics for AI Tools

You cannot prove improvement if you never establish a baseline.

Start by capturing a small set of metrics that show how AI tools are currently used and how teams perform before training begins.

Focus on signals that are simple but meaningful:

- Adoption rate: The percentage of team members who use at least one AI tool each week

- Frequency of usage: Number of sessions per week or number of active days

- Time on tool: A supporting signal, not a primary KPI

- Role-specific outcomes: Metrics such as cycle time, backlog size, QA rework rates, reply time, or content output

The most useful baseline metrics are tied to real work outcomes rather than tool activity. Many organizations draw on vendor and industry guidance when choosing these indicators.
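Adoption rate is a simple ratio; the frequency and outcome signals need slightly more care. The sketch below computes a one-week baseline for a single team: the median number of active AI days among people who used AI at all, and the median cycle time from your work tracker. The input formats and names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from statistics import median
from typing import Dict, Iterable, List, Set, Tuple


@dataclass(frozen=True)
class Baseline:
    median_active_days: float      # among people who used AI at least once
    median_cycle_time_days: float  # role-specific outcome, measured separately


def build_baseline(ai_use: Iterable[Tuple[str, date]],
                   cycle_times_days: List[float]) -> Baseline:
    """Summarize one team's pre-training week.

    `ai_use` holds (pseudonymous_id, day) pairs for AI-tool activity; several
    sessions on the same day count once. `cycle_times_days` comes from your
    work tracker (an assumed export) and must not be empty.
    """
    days_per_person: Dict[str, Set[date]] = defaultdict(set)
    for person, day in ai_use:
        days_per_person[person].add(day)
    active_days = [len(days) for days in days_per_person.values()]
    return Baseline(
        median_active_days=median(active_days) if active_days else 0.0,
        median_cycle_time_days=median(cycle_times_days),
    )
```

Medians are used rather than means so that one unusually heavy user or one stuck ticket does not distort the baseline.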

Step 3: Translate Usage Patterns Into Training Hypotheses

This is where many teams stop too early. They collect usage data but never convert it into actionable learning programs.

A simple “signal to hypothesis” worksheet can help you bridge that gap.

For example:

- Signal: Marketing operations teams rarely use AI tools

- Hypothesis: They may not know how to use AI for reporting analysis or data summarization

- Training intervention: A focused 60-minute workshop combined with prompt templates and open office hours

- Expected impact: Faster reporting cycles and fewer revision requests

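You can strengthen this process with basic measurement techniques such as control groups or phased rollouts, which help you tell whether an improvement came from the training or from something else that changed at the same time.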

Step 4: Create Targeted Programs Instead of Blanket Training

Generic AI training rarely changes behavior. Employees need guidance that connects directly to the workflows they handle every day.

A more effective strategy is to design role-specific upskilling tracks.

For example:

- AI for customer support replies: Focus on summarizing tickets, controlling tone, and generating escalation templates.

- AI for engineering debugging: Cover automated test generation, structured debugging prompts, and code review checklists.

- AI for marketing content operations: Teach how to generate briefs, build structured outlines, and repurpose long-form content across channels.

Frameworks from organizations such as Boston Consulting Group often recommend building AI capability in layers. Start with basic tool literacy, then move toward workflow integration and advanced problem solving.

Step 5: Roll Out in 30 Days With a Realistic Cadence

You do not need a six-month transformation program to start seeing results. A focused 30-day rollout is often enough to validate the approach.

A practical cadence might look like this:

Week 1: Establish your baseline metrics, create a simple AI tool map, and publish usage guardrails.

Week 2: Run two role-specific workshops and release a shared prompt template library.

Week 3: Host team office hours and provide managers with a short coaching guide so they can reinforce new workflows.

Week 4: Measure early impact, document what worked, and share learnings before expanding the program to another team.

This approach keeps the process lightweight while still generating measurable insights. Many enterprise AI adoption playbooks recommend the same pattern: start small, measure outcomes carefully, and scale only after you see real improvement.

Once you start monitoring AI usage patterns, the next step is turning that visibility into something practical. You want a small set of tools and metrics that help you connect AI activity to real outcomes, not just tool engagement.

Where Do Workforce Analytics Platforms Help?

You can run this entire process manually if you want, but doing so means pulling browser logs, application data, and workflow metrics from different systems and reconciling multiple reports.

This is where workforce analytics platforms can simplify things. Employee productivity software and digital activity analytics tools automatically capture patterns in application and website usage. Instead of building your own tracking structure, you get a clearer view of how teams actually work across tools and workflows.

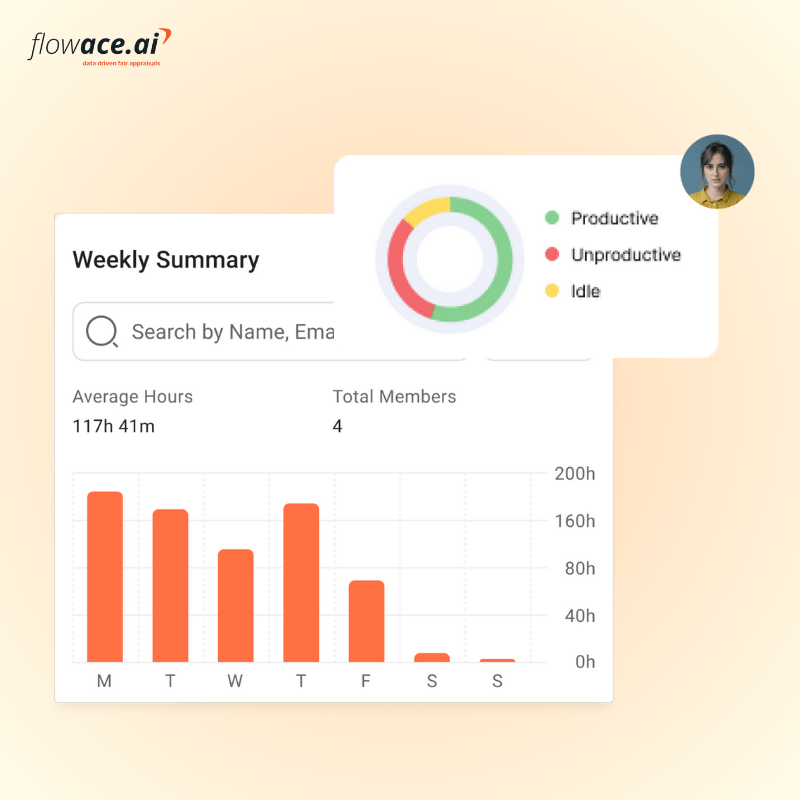

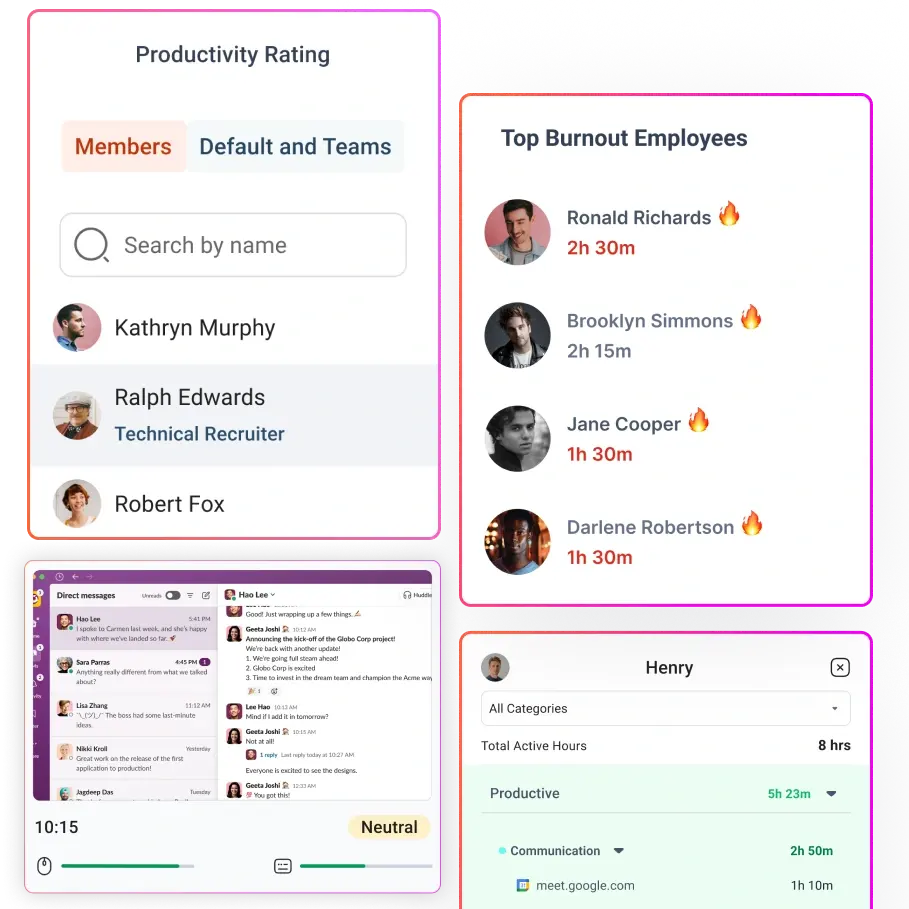

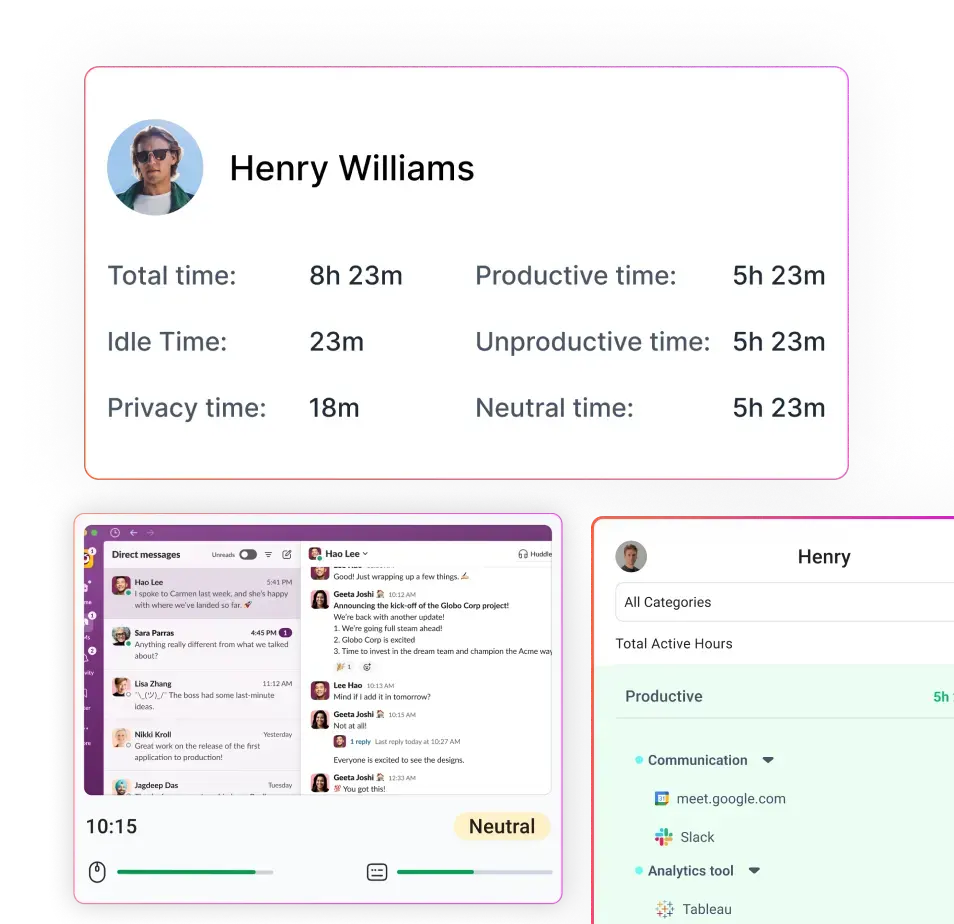

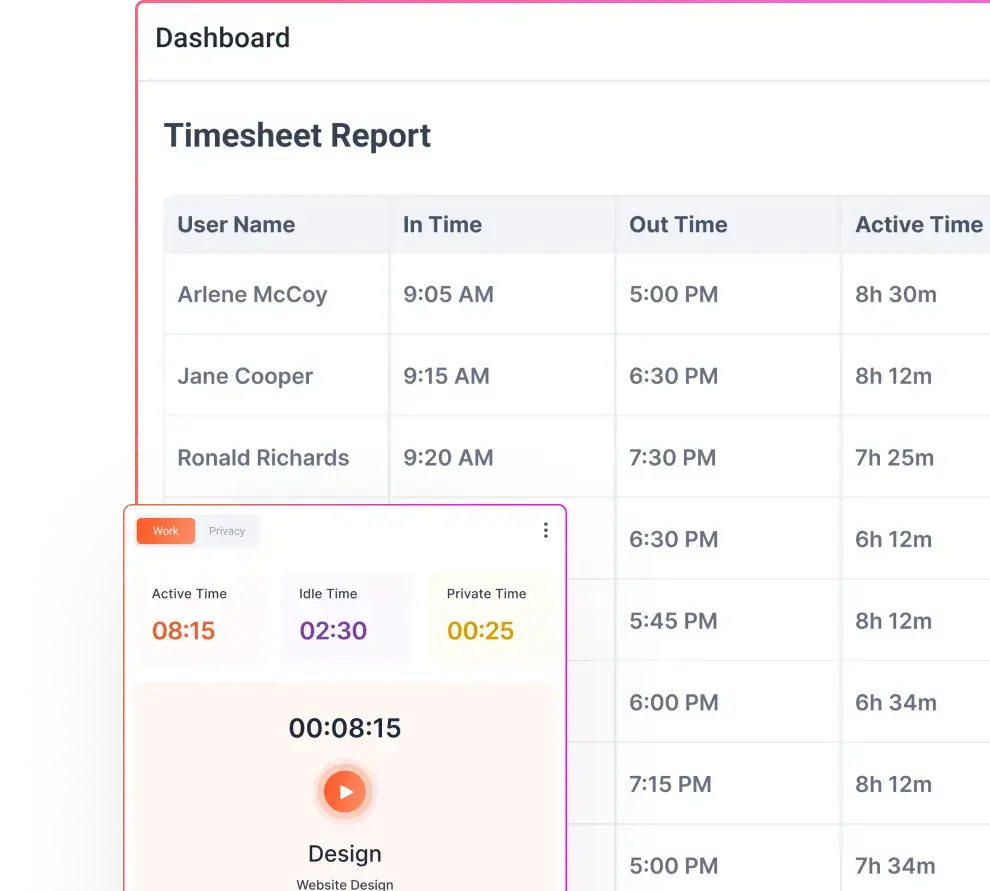

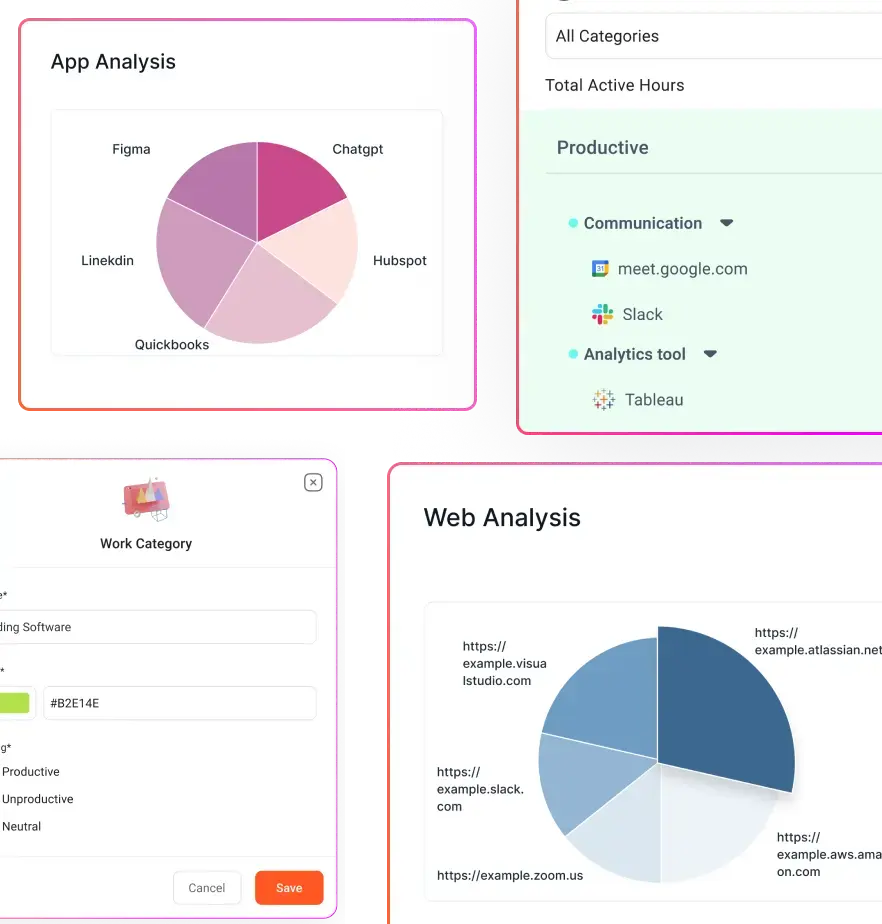

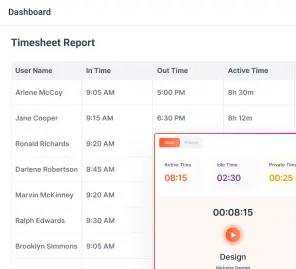

For example, Flowace is an AI-powered workforce analytics platform that tracks application and website usage and classifies activity as productive, unproductive, or neutral. The platform also includes controls such as Privacy Mode so employees can protect sensitive work sessions.

When you look at this data at the team level, it becomes easier to identify patterns such as which departments use AI tools regularly and which ones rarely touch them. That visibility helps you plan training based on real workflows instead of assumptions or anecdotal feedback.

Flowace offers a seven-day free trial and several pricing tiers depending on the level of analytics and features your organization needs.

Flowace pricing is organized into four plans:

- Basic Plan:

  - About $2.99 per user per month when billed monthly, or about $1.99 per user per month on an annual plan.

  - The Basic plan includes features such as screenshots, silent tracking, attendance tracking, project and task management, and configurable idle timeout settings.

- Standard Plan:

  - About $4.99 per user per month when billed monthly, or about $3.99 per user per month annually.

  - The Standard plan adds application and website tracking, productivity ratings, approval workflows, raw activity logs, and reporting dashboards.

- Premium Plan:

  - About $10.00 per user per month when billed monthly, or about $6.98 per user per month when billed annually.

  - The Premium plan introduces deeper analytics features such as keyboard and cursor activity insights, integrations with other tools, billing and invoicing capabilities, internet connectivity reporting, and single sign-on (SSO) support.

- Enterprise Plan:

  - Custom pricing depending on company requirements.
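To compare billing options for a pilot, multiply the per-user monthly price by headcount and by twelve months. The sketch uses the approximate prices listed above, which may change, so treat it as a budgeting aid and confirm current pricing with the vendor.

```python
# Approximate per-user monthly prices (USD) from the plan list above.
# These are assumptions for a budgeting sketch; confirm current pricing.
PRICES = {
    "basic": {"monthly": 2.99, "annual": 1.99},
    "standard": {"monthly": 4.99, "annual": 3.99},
    "premium": {"monthly": 10.00, "annual": 6.98},
}


def yearly_cost(plan: str, billing: str, users: int) -> float:
    """Twelve months of per-user pricing for `users` seats, rounded to cents."""
    return round(PRICES[plan][billing] * users * 12, 2)


if __name__ == "__main__":
    for billing in ("monthly", "annual"):
        print(billing, yearly_cost("standard", billing, users=25))
```

For a 25-person team on the Standard plan, that works out to about $1,497 per year billed monthly versus about $1,197 billed annually.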

If you want to operationalize AI adoption with clearer visibility and fewer manual reports, you can start a free trial or book a free demo of Flowace near the end of your pilot, once your baseline and guardrails are written down.

FAQs

1. How can you measure AI adoption in the workplace without creating a surveillance culture?

The most effective approach is to measure team-level adoption patterns rather than individual behavior. Focus on signals such as how often teams use approved AI tools, how usage varies across roles, and whether it correlates with improved outcomes like faster cycle times or better work quality.

You should also publish clear guardrails explaining what data is collected and why. Avoid collecting prompt content or monitoring individual activity unless there is a clear and justified reason.

2. What metrics actually reflect meaningful AI adoption?

Good AI adoption metrics connect tool usage to real work outcomes. A small but meaningful set of metrics usually includes:

- Adoption rate: The percentage of team members using at least one AI tool during a given time period

- Usage frequency: How often teams interact with AI tools each week

- Time saved per task: Measured through simple before-and-after comparisons

- Quality indicators: Role-specific metrics such as fewer revisions, reduced QA defects, or faster response times

These metrics help you understand whether AI is improving work rather than simply increasing tool usage.

3. How can AI usage analytics help you identify skill gaps across teams?

AI usage patterns often reveal capability gaps. For example:

- Low adoption in workflows where AI should clearly help

- Large differences in usage across teams that perform the same role

- High tool usage without measurable improvements in productivity or quality

These signals typically indicate that employees need more guidance on how to integrate AI into their day-to-day workflows.

4. How do you prevent AI analytics from turning into micromanagement?

Start by analyzing patterns across teams instead of scoring individual employees. The goal of AI analytics should be to improve workflows and training, not to rank people.

Use aggregated insights to design coaching sessions, role-specific workshops, and shared templates. If deeper analysis is needed, make it opt-in and clearly communicate how the data will be used.

5. Is monitoring employee AI usage legal?

The legality of workplace monitoring depends on local laws and regulations. However, most regulatory frameworks emphasize three core principles: necessity, proportionality, and transparency.

Organizations should clearly explain what data is collected, why it is needed, and how it will be used. It is also important to review applicable guidance from regulators and consult legal counsel before implementing monitoring programs.

6. Should companies track employee prompts and AI conversations?

In most cases, prompt content should not be collected by default. Tool-level usage data and workflow metrics are usually sufficient for understanding adoption patterns.

Prompt or conversation content should only be captured when there is a clear legal basis, strict access controls, and well-defined governance policies.

7. What is shadow AI and why should organizations care about it?

Shadow AI refers to employees using unapproved AI tools without formal organizational guidance.

This can create risks such as data leakage, compliance violations, or inconsistent workflows. At the same time, shadow AI is often a signal that employees are trying to solve real problems. It usually indicates that the organization needs clearer policies and approved alternatives.

8. How can you prove that AI training improved productivity?

The best way to validate training impact is through a controlled pilot program. Run a training initiative with one team while keeping another similar group as a comparison.

Then measure business metrics such as response time, cycle time, quality scores, or rework rates before and after the program. This allows you to evaluate whether AI training produced measurable improvements.
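The comparison described above is usually called a difference-in-differences estimate: subtract the comparison group's change from the trained group's change, so that shifts affecting everyone (seasonality, a product launch) are not credited to the training. A minimal sketch, assuming you have per-task cycle times for both groups before and after the pilot:

```python
from statistics import mean
from typing import Sequence


def diff_in_diff(trained_before: Sequence[float], trained_after: Sequence[float],
                 control_before: Sequence[float], control_after: Sequence[float]) -> float:
    """Change in the trained group minus change in the comparison group.

    For a lower-is-better metric such as cycle time, a negative result
    suggests the training helped beyond whatever changed for both groups.
    """
    trained_change = mean(trained_after) - mean(trained_before)
    control_change = mean(control_after) - mean(control_before)
    return trained_change - control_change


if __name__ == "__main__":
    # Hypothetical cycle times in days for each group, before and after.
    effect = diff_in_diff([5.0, 5.2, 4.8], [4.0, 4.1, 3.9],
                          [5.1, 4.9, 5.0], [4.6, 4.5, 4.7])
    print(f"Estimated effect: {effect:+.2f} days")
```

The estimate relies on the two groups trending similarly without the training, and small pilot groups produce noisy results, so a single number is directional evidence rather than proof.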

9. Why is AI adoption often uneven across teams?

AI adoption varies widely because different roles have different workflows. Some teams can integrate AI naturally into their daily tasks, while others need more structured guidance.

Other common factors include generic training programs, unclear policies about approved tools, and uncertainty about safe AI usage. These factors often lead employees to either avoid AI or use it quietly without sharing best practices.